A couple of weeks ago I attended SREcon EMEA in Amsterdam. Here are some scattered thoughts about it, with no pretense of being exhaustive or coherent.

Looking Back

There are only a handful of conferences I’ve attended where I felt “at home”. Going back in time, Surge was the first one, then came Velocity. I’m adding SREcon to that list. It definitely felt like I was among people who speak the same language and have similar breadth and depth of expertise, and yet it felt somewhat strange at the same time.

As I see it, there are at least three “tiers” of attendees at such a big and niche conference: the FAANG folks, the tiny companies with a sysadmin or devops person or two, and then the big ocean of mid-sized companies, where people like us are. Our SRE team is four people and we manage a service with millions of monthly users. Needless to say, we have a lot on our plate :-)

I came to SREcon after a hiatus from conferences for some years. After a while, conferences tend to become self-referential and people start talking about the same things over and over again. I wanted to understand how things had changed in our field and what people were talking about the most, get some fresh perspectives, and perhaps connect with people from other companies. What prompted me to do this was Niall Murphy tearing the SRE bible book apart.

The Question of SRE Identity

This year’s conference topic was “What could SRE be?”.

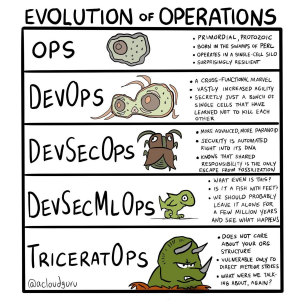

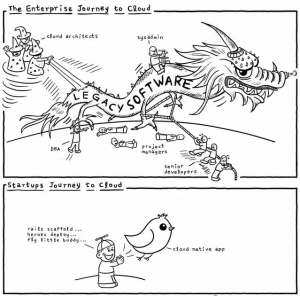

No surprise, then, that a good portion of the talks were about what I refer to as the question of identity for SREs. We have seen the same happen — and a lot more during all these years — for the DevOps movement.

What could SRE be, then? According to some presentations, one would conclude that whatever SRE is, it’s no longer what Google intended, it’s not what anyone else thinks it is either, it’s just what you think it is: a subjectivist view.

Among the USENIX Slack conversations, there was a lot of chit-chat about SRE identity. My personal contribution was the following meme:

Other funny memes that were shared:

An interesting fact I learned during the conference is that the Google SRE book was written by assembling contributions from the best teams at Google, picking out their respective best practices. Paradoxically, this implies that the SRE book is not representative of how even Google itself does SRE. If you also consider that, at the time the SRE book was published (2016), Google employed about 1,200 people in the various SRE teams, the only possible conclusion is… if you are not Google, there is likely very little that you can apply to your everyday mere-mortal-SRE life.

Before you think I’m exaggerating, this conclusion was drawn by (ex-)Google engineers themselves, for example in Alex Hidalgo’s “Diamonds under Pressure” talk and in what was (in my opinion) one of the best talks of the conference, Emil Stolarsky’s “Unified Theory of SRE”. Another entertaining presentation in the same vein was Andrew Clay Shafer’s “SRE as She Is Spoke”. Andrew put forward the thesis that “progress [on the SRE journey] stops when the needs are met”, which seems a reasonable and pragmatic approach.

The videos are not up yet, but they should be in a few weeks.

Alongside the “subjectivist” view, there were other talks, which could be classified as systems thinking, that focused on the more general and broad aspects of what SREs do: how to handle complex systems, human factors, and so on. Among the best, in my opinion, were:

- The first plenary session on Day 1, “Knowledge and Power: A Sociotechnical Systems Discussion on the Future of SRE” by Laura Maguire and Lorin Hochstein. I’ve been following Lorin’s excellent blog, Surfing Complexity, for some years now.

- Laura Nolan’s “What SRE Could Be: Systems Reliability Engineering”. Lots of material to go deeper into systems thinking. This was brilliant; I think Laura raised the level of the conversation with this talk.

What else?

The question of SRE identity accounted for a notable part of the talks, but thankfully not all. It’s good to pause and reflect on our role, but personally that’s not why I was interested in SREcon, not primarily at least. What I like are the deep technical talks, where I get to know more about how other companies actually do the stuff we call SRE. Given my past conference experience, I expected Facebook/Meta’s talk to be somewhat disappointing, and it was. While some details of how Meta is structured were shared, and are always interesting, I expected a bit more on how the incident actually happened.

I loved Effie Mouzeli’s talk on how to make teams resilient, “Is Our Team as Resilient as Our Systems?”. We naturally focus on systems, but teams are a crucial part of the equation. My team and I have had to work on this a lot in the past years, and I’m hoping to share more about this soon. I felt this talk had a lot of good insights, some of which we’ve also applied over time.

Another talk that deserves a mention is Chris Sinjakli’s reflection on broadening the scope of how we work on reliability for our systems. This is sometimes difficult to do when toil is a big part of our jobs. Luckily it’s not for our team, not anymore at least, so this talk felt very relevant to me, and I recommend it.

I couldn’t attend some of the talks because of the two parallel tracks. I hope to catch up when the slides and videos are published.

What about the hallway track?

In general, people say that conferences are most useful because of the casual conversations you can have in the hallways. While I do agree with it, the opportunities to have conversations vary depending on the type of person you are, and the people you meet, of course. My impression is that while some people at SREcon were happy to have conversations, most were likewise happy to be left alone, which is fair enough :-)

That said, it was really nice to meet people and chat. Almost everyone I talked to knew Kahoot! directly, was happy to share details about what they’re doing, and was equally interested in what we’re doing.

In some of these conversations I tried to push for more concrete, down-to-earth talks on how smaller companies like ours do SRE. It’s ok to aspire to, or be interested in, how Google runs, but you come away with absolutely zero information that’s useful to your work life. Possibly there’s even a downside: people going home thinking they have to do whatever Google does (see the sections above). So ultimately… let’s give less importance to the Googles of the world, please!

Besides the hallway track, there was a nice “sidewalk” track. We walked around the city, 15 km a day on average — you gotta track those SLOs… — and I also managed to snap some nice pictures of Amsterdam at sunrise and sunset.

The Venue and Organization

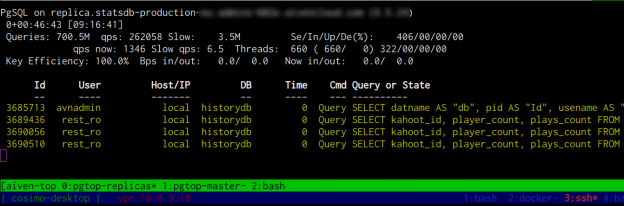

Loved all of it. Honestly, it was the best conference I’ve ever been to. The venue was spectacular, there was plenty of space, slides were clearly visible on screen, and the food was awesome! We also used one of the available meeting rooms to participate in our own company hackathon after the conference finished, until they kicked us out. Here’s a sneak peek of what our team was working on:

I hope to return to SREcon next year in Dublin. By then, I’d love to see more not-Google, not-Meta, etc… talks on the program. Perhaps we (or you!) should think about presenting too, why not?