Most deep learning or machine learning (ML) articles and tutorials focus on how to build, train and evaluate a model. The model deployment stage is rarely covered in detail, even though it is just as important, if not fundamental, to an ML system. In other words, how do we take a working ML model from a Jupyter notebook to a production ML-powered API?

I hope more and more practitioners will cover the deployment aspect of ML models. For now, I can offer my own experience about how I approached this problem, hoping this will be useful to some of you out there.

Creating a useful ML model

How to create a useful ML model is the part of the work I won’t cover in this post. :-)

I assume that you already have:

- a model or pipeline that is either pre-trained or that you have trained yourself

- a model based on PyTorch, though most of the information here will probably help with any ML framework

- some idea on how to make your model available as a RESTful API

First step: defining a simple API

The rest of this article will use Python as a programming language, for various reasons, the most important being that the ML model is based on PyTorch. In my specific case, the problem I worked on was text clustering.

Given a set of sentences, the API should output a list of clusters. A cluster is a group of sentences that have a similar meaning, or as similar as possible. This task is usually referred to with the term “semantic similarity”.

Here’s an example. Given the sentences:

- “Dog Walking: 10 Simple Steps”

- “The Secrets of Dog Walking”

- “Why You Need To Dog Walking”

- “The Art of Dog Walking”

- “The Joy of Dog Walking”

- “Public Speaking For The Modern Age”

- “Learn The Art of Public Speaking”

- “Master The Art of Public Speaking”

- “The Best Way To Public Speaking”

The API should return the following clusters:

- Cluster 1 = (“Dog Walking: 10 Simple Steps”, “The Secrets of Dog Walking”, “Why You Need To Dog Walking”, “The Art of Dog Walking”, “The Joy of Dog Walking”)

- Cluster 2 = (“Public Speaking For The Modern Age”, “Learn The Art of Public Speaking”, “Master The Art of Public Speaking”, “The Best Way To Public Speaking”)
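To make the input and output shapes concrete, here is a minimal sketch of such a grouping function. It is not the model behind this API: it uses a toy word-overlap (Jaccard) score and a greedy rule that compares each sentence to the first member of every cluster. The names `tokens`, `jaccard` and `cluster_sentences`, and the `0.2` threshold, are made up for illustration.

```python
import re
from typing import List, Set


def tokens(sentence: str) -> Set[str]:
    """Lowercase a sentence and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", sentence.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Word-overlap score between two token sets, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_sentences(sentences: List[str], threshold: float = 0.2) -> List[List[str]]:
    """Toy greedy clustering: a sentence joins the first cluster whose first
    member overlaps enough with it, otherwise it starts a new cluster."""
    clusters: List[List[str]] = []
    for sentence in sentences:
        for cluster in clusters:
            if jaccard(tokens(sentence), tokens(cluster[0])) >= threshold:
                cluster.append(sentence)
                break
        else:
            # No existing cluster was similar enough
            clusters.append([sentence])
    return clusters


SENTENCES = [
    "Dog Walking: 10 Simple Steps",
    "The Secrets of Dog Walking",
    "Why You Need To Dog Walking",
    "The Art of Dog Walking",
    "The Joy of Dog Walking",
    "Public Speaking For The Modern Age",
    "Learn The Art of Public Speaking",
    "Master The Art of Public Speaking",
    "The Best Way To Public Speaking",
]

for number, group in enumerate(cluster_sentences(SENTENCES), start=1):
    print(f"Cluster {number} = {group}")
```

A real semantic similarity model compares meaning rather than shared words, so treat this only as an illustration of the list-of-sentences in, list-of-clusters out contract.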

The model

I plan to describe the details of the specific model and algorithm I used in a future post. For now, the important aspect is that this model can be loaded in memory with some function we define as follows:

model = get_model()

This model will likely be a very large in-memory object. We only want to load it once in our backend process and use it throughout the request lifecycle, possibly for more than just one request. A typical model will take a long time to load. Ten seconds or more is not unheard of, and we can’t afford to load it for every request. It would make our service terribly slow and unusable.
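One simple way to get this load-once behaviour inside a single process is to cache the loader's result. The sketch below assumes a hypothetical `FakeModel` class standing in for the real model; only the name `get_model` comes from this post, and its body here is an illustration, not the actual loader.

```python
from functools import lru_cache


class FakeModel:
    """Stand-in for a large model; a real one could take seconds to build."""

    instances_created = 0

    def __init__(self) -> None:
        FakeModel.instances_created += 1


@lru_cache(maxsize=1)
def get_model() -> FakeModel:
    """Build the model on the first call and return the same object afterwards."""
    return FakeModel()


first = get_model()
second = get_model()
print(first is second, FakeModel.instances_created)
```

Note that the cache lives inside one process only. Sharing the model across several worker processes is a separate problem, discussed further down.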

A simple Python backend module

Last year I discovered FastAPI, and I immediately liked it. It’s easy to use, intuitive and yet flexible. It allowed me to quickly build up every aspect of my service, including its documentation, auto-generated from the code.

FastAPI provides a well-structured base to build upon, whether you are just starting with Python or you are already an expert. It encourages the use of type hints and model classes for each request and response. Even if you have no idea what these are, just follow FastAPI’s good defaults and you will likely find this way of working quite neat.

Let’s build our service from scratch. I usually start from a Python virtualenv, an isolated Python environment where you can install your dependencies.

virtualenv --python /usr/bin/python3.8 .venv

source .venv/bin/activate

If you are not familiar with virtualenv, there are many tutorials you can read online.

Next step, we write our requirements file, with all the python modules we need to run our project. Here’s an example:

# --- requirements.txt

fastapi~=0.61.1

Save the file as requirements.txt. You can install the modules with pip. There are plenty of guides on how to get pip on your system if you don’t have it:

pip install -r requirements.txt

Doing so will install FastAPI. Let’s create our backend now. Copy the following skeleton API into a main.py file. If you prefer, you can clone the FastAPI template published at https://github.com/cosimo/fastapi-ml-api:

from typing import Optional

from fastapi import FastAPI

app = FastAPI()

model = get_model()

@app.post("/cluster")
def cluster():
    return {"Hello": "World"}

You can run this service with:

uvicorn main:app --reload

You’ll notice right away that any change to the code will trigger a reload of the server. If you are using the production ML model, the model’s own load time will quickly become a nuisance. I haven’t managed to solve this problem yet. One approach I could see working is to either mock the model results if possible, or use a lighter model for development.
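The mocking idea could be sketched as follows. Everything here is hypothetical: the `USE_STUB_MODEL` environment variable, the `StubModel` class with its `cluster` method, and the `load_real_model` placeholder are illustrative names, not part of the actual project.

```python
import os
from typing import List


class StubModel:
    """Instant-loading stand-in that returns a fixed, fake answer."""

    def cluster(self, sentences: List[str]) -> List[List[str]]:
        # Pretend every sentence belongs to the same cluster
        return [list(sentences)]


def load_real_model():
    """Placeholder for the slow production loader."""
    raise NotImplementedError("plug the real model loader in here")


def get_model():
    """Return the stub when USE_STUB_MODEL=1, the real model otherwise."""
    if os.environ.get("USE_STUB_MODEL") == "1":
        return StubModel()
    return load_real_model()
```

During development the server could then be started with `USE_STUB_MODEL=1 uvicorn main:app --reload`, so that each code reload stays fast.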

Invoking uvicorn in this way is recommended for development. For production deployments, FastAPI’s docs recommend using gunicorn with the uvicorn workers. I haven’t looked into other options in depth. There might be better ways to deploy a production service. For now this has proven to be reliable for my needs. I did have to tweak gunicorn’s configuration to my specific case.

Running our service with gunicorn

The gunicorn start command looks like the following:

gunicorn -c gunicorn_conf.py -k uvicorn.workers.UvicornWorker --preload main:app

Note the arguments to gunicorn:

- -k tells gunicorn to use a specific worker class

- main:app instructs gunicorn to load the main module and use app (in this case the FastAPI instance) as the application code that all workers should be running

- --preload causes gunicorn to change the worker startup procedure

Preloading our application

Normally gunicorn would create a number of workers, and then have each worker load the application code. The --preload option inverts the sequence of operations by loading the application instance first and then forking all worker processes. Because of how fork() works, each worker process will be a copy of the main gunicorn process and will share (part of) the same memory space.

Making our ML model part of the FastAPI application (or making our model load when the FastAPI application is first created) will cause our model variable to be “shared” across all processes!

The effect of this change is massive. If our model, once loaded into memory, occupies 1 GB of RAM, and we want to run 4 gunicorn workers, the net gain is 3 GB of memory that we will have available for other uses. In a container-based deployment, it is especially important to keep the memory usage low. Reclaiming 75% of the total memory that would otherwise be used is an excellent result.

I don’t know enough details about PyTorch models or Python itself to understand how this sharing keeps being valid across the process lifetime. I believe that modifying the model in any way will cause copy-on-write operations and ultimately the model variable to be copied in each process memory space.
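The load-then-fork sequence can be imitated with the standard library alone. The sketch below is not gunicorn code: it loads a stand-in "model" once in the parent, forks a few workers, and lets each worker report that the object is already there without loading it again. It only works on platforms that support the fork start method (Linux, macOS), and it shows inheritance of the loaded object, not how much physical memory ends up being shared.

```python
import multiprocessing as mp
import os

LOAD_COUNT = 0


def load_model():
    """Stand-in for an expensive model load; counts how often it runs."""
    global LOAD_COUNT
    LOAD_COUNT += 1
    return list(range(1000))


# Loaded once in the parent process, like gunicorn does with --preload
MODEL = load_model()


def worker(queue) -> None:
    # The forked child inherits MODEL and LOAD_COUNT from the parent
    queue.put((os.getpid(), LOAD_COUNT, len(MODEL)))


def run_workers(count: int):
    """Fork `count` workers and collect what each one sees."""
    ctx = mp.get_context("fork")
    queue = ctx.Queue()
    processes = [ctx.Process(target=worker, args=(queue,)) for _ in range(count)]
    for process in processes:
        process.start()
    results = [queue.get() for _ in processes]
    for process in processes:
        process.join()
    return results


if __name__ == "__main__":
    for pid, load_count, size in run_workers(3):
        print(f"worker {pid}: loads={load_count} model_size={size}")
```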

Complications

Turns out we don’t get this advantage for free. There are a few complications with having a PyTorch model shared across different processes. The PyTorch documentation covers them in detail, even though I’m not sure I did in fact understand all of it.

In my project I tried several approaches, without success:

- use torch.multiprocessing in the gunicorn configuration module

- modify gunicorn itself (!) to use torch.multiprocessing to load the model. I did it just as a prototype, but even then… bad idea

- investigate alternative worker models instead of prefork. I don’t remember the results of this investigation, but they must have been unsuccessful

- use /dev/shm (Linux shared memory tmpfs) as the filesystem on which to store the PyTorch model file

A Solution?

The approach I ended up using is the following.

gunicorn must create the FastAPI application to start it, so I loaded the model (as a global) when creating the FastAPI application, and verified the model was loaded before that, and only loaded once.

I added the preload_app = True option to gunicorn’s configuration module.

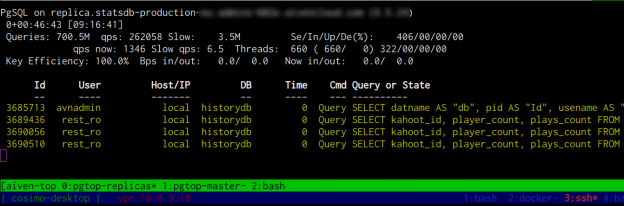

I limited the number of workers (my tests showed 3 to work best for my use case), and limited the number of requests each gunicorn worker will serve. I used max_requests = 50. I limited the number of requests because I noticed a sudden increase in memory usage in each worker, regularly, some minutes after startup. I couldn’t trace it back to something specific, so I used this dirty workaround.

Another tweak was to give the gunicorn workers longer than the default time to start up. Otherwise they would be killed and respawned by gunicorn’s own watchdog, as they were taking too long to load the ML model on startup. I used a timeout of 60 seconds instead of the default 30.

The most difficult problem to troubleshoot was workers suddenly stopping and not serving any more requests after a short while. I solved that by not using `async` on my FastAPI application methods. Other people have reported this solution not working for them… This remains to be understood.
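One possible explanation, which is an assumption and not something verified for this service, is that FastAPI runs `async def` handlers directly on the event loop, while plain `def` handlers are run in a thread pool. A long, blocking model call inside an `async def` handler then stalls everything else on that loop. The standard-library sketch below imitates the effect; `blocking_inference`, `heartbeat` and the two handlers are hypothetical names.

```python
import asyncio
import time
from typing import List


def blocking_inference() -> str:
    """Stand-in for a CPU-bound model call that does not yield control."""
    time.sleep(0.2)
    return "clusters"


async def heartbeat(log: List[str]) -> None:
    """Represents other work the event loop should keep serving."""
    for _ in range(3):
        log.append("beat")
        await asyncio.sleep(0.05)


async def handler_blocking(log: List[str]) -> None:
    # Calls the blocking function directly: the event loop is stuck meanwhile
    blocking_inference()
    log.append("done")


async def handler_threaded(log: List[str]) -> None:
    # Offloads the blocking call to a thread, so the loop stays responsive
    loop = asyncio.get_running_loop()
    await loop.run_in_executor(None, blocking_inference)
    log.append("done")


async def run_both(handler) -> List[str]:
    """Run one handler next to the heartbeat and return the event order."""
    log: List[str] = []
    await asyncio.gather(handler(log), heartbeat(log))
    return log


if __name__ == "__main__":
    print(asyncio.run(run_both(handler_blocking)))
    print(asyncio.run(run_both(handler_threaded)))
```

In the first run the heartbeat cannot start until the blocking call has finished. In the second, it keeps ticking while the call runs in a thread.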

Lastly, when loading the PyTorch model, I called the .eval() and .share_memory() methods on it before returning it to the FastAPI application. This happens only on first load.

For example, this is what my model loading looks like:

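from sentence_transformers import SentenceTransformer

def load_language_model() -> SentenceTransformer:
    # SOME_MODEL_NAME is a placeholder for the name of the model to load
    language_model = SentenceTransformer(SOME_MODEL_NAME)
    # Switch to inference mode and move the weights to shared memory
    language_model.eval()
    language_model.share_memory()
    return language_model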

The value returned by this method is assigned to a global loaded before the FastAPI application instance is created.

I doubt this is the way to do things, but I did not find any clear guide on how to do this. Information about deploying production models seems quite scarce, if you remember the premise to this post.

In summary:

- preload_app = True

- Load the ML model before the FastAPI (or WSGI) application is created

- Use .eval() and .share_memory() if your model is PyTorch-based

- Limit the number of workers/requests

- Increase the worker start timeout period

Read on for other tips about dockerization of all this. But first…

Gunicorn configuration

Here are more or less all the customizations needed for the gunicorn configuration:

# Preload the FastAPI application, so we can load the PyTorch model

# in the parent gunicorn process and share its memory with all the workers

preload_app = True

# Limit the amount of requests a single worker will handle, so as to

# curtail the increase in memory usage of each worker process

max_requests = 50
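For reference, a fuller configuration module that also covers the worker count and the timeout mentioned earlier might look like the sketch below. It assumes the values come from the same environment variables set in the Dockerfile later in this post (MAX_WORKERS, TIMEOUT, PORT, LOG_LEVEL). The `max_requests_jitter` line is an optional extra that is not part of my original setup.

```python
# --- gunicorn_conf.py (sketch, not the exact file used in production)
import os

# Address and port the server listens on
bind = f"0.0.0.0:{os.environ.get('PORT', '8000')}"
loglevel = os.environ.get("LOG_LEVEL", "info")

# Run the FastAPI app through uvicorn workers
worker_class = "uvicorn.workers.UvicornWorker"
workers = int(os.environ.get("MAX_WORKERS", "3"))

# Seconds before an unresponsive worker is killed and restarted
timeout = int(os.environ.get("TIMEOUT", "60"))

# Load the application (and the model) in the parent before forking
preload_app = True

# Recycle each worker after this many requests to cap memory growth
max_requests = 50

# Assumption: spread the restarts so workers do not all recycle at once
max_requests_jitter = 10
```

When the worker class is set in the configuration module like this, the -k option on the gunicorn command line becomes redundant.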

Bundling model and application in a Docker container

Your choice of deployment target might be different. What I used for our production environment is a Docker container built from a Dockerfile. It works well as a development option, and it is also good for production if you deploy to a platform like Kubernetes, as I did.

Initially I tried to build a Dockerfile with everything I needed. I kept the PyTorch model file as a binary in the git repository. The binary was larger than 500 MB, and that required the use of git-lfs, at least for GitHub repositories. I found that to be a problem when trying to build Docker containers from GitHub Actions. I couldn’t easily reconstruct the git-lfs objects at build time. Another shortcoming of this approach is that the large model file makes the Docker build context huge, increasing build times.

Two stage Docker build

In cases like this, splitting the Docker build in two stages can help. I decided to bundle the large model binary into a first stage Docker container, and then build up my application layer on top as stage two.

Here’s how it works in practice:

# --- Dockerfile.stage1

# https://github.com/tiangolo/uvicorn-gunicorn-fastapi-docker

FROM tiangolo/uvicorn-gunicorn-fastapi:python3.8

# Install PyTorch CPU version

# https://pytorch.org/get-started/locally/#linux-pip

RUN pip3 install torch==1.7.0+cpu torchvision==0.8.1+cpu torchaudio==0.7.0 -f https://download.pytorch.org/whl/torch_stable.html

# Here I'm using sentence_transformers, but you can use any library you need

# and make it download the model you plan using, or just copy/download it

# as appropriate. The resulting docker image should have the model bundled.

RUN pip3 install sentence_transformers==0.3.8

RUN python -c 'from sentence_transformers import SentenceTransformer; model = SentenceTransformer("")'

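Build this container image and push it to your Docker container registry with the stage1 tag.

After that, you can build your stage2 Docker image starting from the stage1 image. In the Dockerfile below, $(REGISTRY)/$(PROJECT) is a placeholder for your own registry and project name. Dockerfiles do not expand Make-style variables, so substitute the real values.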

# --- Dockerfile

FROM $(REGISTRY)/$(PROJECT):stage1

# Gunicorn config uses these env variables by default

ENV LOG_LEVEL=info

ENV MAX_WORKERS=3

ENV PORT=8000

# Give the workers enough time to load the language model (30s is not enough)

ENV TIMEOUT=60

# Install all the other required python dependencies

COPY ./requirements.txt /app

RUN pip3 install -r /app/requirements.txt

COPY ./config/gunicorn_conf.py /gunicorn_conf.py

COPY ./src /app

# COPY ./tests /tests

You may need to increase the runtime shared memory to be able to load the ML model in a preload scenario.

If that’s the case, or if you get errors on model load when running your project in Docker or Kubernetes, you need to run docker with --shm-size=1.75G for example, or any suitable amount of memory for your own model, as in:

docker run --shm-size=1.75G --rm <command>
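To check from inside the container how much shared memory is actually available, a small helper like the following can be useful. It is a sketch that assumes the shared memory filesystem is mounted at /dev/shm, as is usual on Linux. The function names are made up for illustration.

```python
import os
import shutil
from typing import Optional


def shm_free_bytes(path: str = "/dev/shm") -> Optional[int]:
    """Return the free space in bytes under `path`, or None if it is missing."""
    if not os.path.isdir(path):
        return None
    return shutil.disk_usage(path).free


def has_room_for(model_bytes: int, path: str = "/dev/shm") -> bool:
    """Tell whether `path` exists and has at least `model_bytes` free."""
    free = shm_free_bytes(path)
    return free is not None and free >= model_bytes


if __name__ == "__main__":
    print("free shared memory (bytes):", shm_free_bytes())
```

Running it once with the default Docker settings and once with --shm-size set makes the difference visible before the model load fails.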

The equivalent directive for a helm chart to deploy in Kubernetes is:

apiVersion: apps/v1
kind: Deployment
metadata:
  ...
spec:
  ...
  template:
    ...
    spec:
      volumes:
        - name: modelsharedmem
          emptyDir:
            sizeLimit: "1750Mi"
            medium: "Memory"
      containers:
        - name: {{ .Chart.Name }}
          ...
          volumeMounts:
            - name: modelsharedmem
              mountPath: /dev/shm
          ...

A Makefile to bind it all together

I like to add a Makefile to my projects, to create a memory of the commands needed to start a server, run tests or build containers. I don’t need to use brain power to memorize any of that, and it’s easy for colleagues to understand what commands are used for which purpose.

Here’s my sample Makefile:

# --- Makefile
# Note: recipe lines must be indented with a tab character
PROJECT=myproject
BRANCH=main
REGISTRY=your.docker.registry/project

.PHONY: docker docker-push docker-stage1 start test

start:
	./scripts/start.sh

# Stage 1 image is used to avoid downloading 2 GB of PyTorch + NLP models
# every time we build our container
docker-stage1:
	docker build -t $(REGISTRY)/$(PROJECT):stage1 -f Dockerfile.stage1 .
	docker push $(REGISTRY)/$(PROJECT):stage1

docker:
	docker build -t $(REGISTRY)/$(PROJECT):$(BRANCH) .

docker-push:
	docker push $(REGISTRY)/$(PROJECT):$(BRANCH)

test:
	JSON_LOGS=False ./scripts/test.sh

Other observations

I had initially opted for Python 3.7, but I tried upgrading to Python 3.8 because of a comment on a related FastAPI issue on GitHub. In my tests I found that Python 3.8 uses slightly less memory than Python 3.7 over time.

See also

I published a sample repository to get started with a project like the one I just described: https://github.com/cosimo/fastapi-ml-api.

And these are the links to issues I either followed or commented on while researching my solutions:

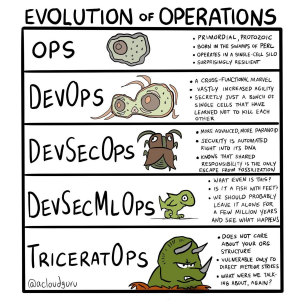

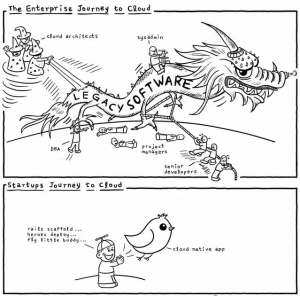

Other funny memes that were shared:
